Humans have always been fascinated with control. The secrets that allow us to successfully wield the forces of nature for our purposes have been the subject of research in a variety of fields. In engineering, dynamic control became a popular discipline around the turn of the 20th century with the invention of airplanes. In order to keep machines up in the air, continuous, reliable control was crucial. Flight is still one of the dominant areas where control theory is applied, but there are numerous other applications that require well-designed control systems. Machinery, robots, electronic power systems, and fluid flow systems are just a few of them.

Any system consisting of a process and actuator (otherwise known as a plant) is governed by natural laws which can be modeled by a system of equations. Changing an input of the plant leads to a change in the output.

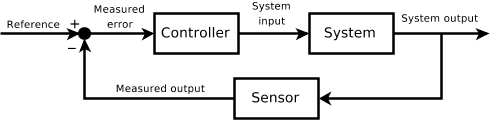
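To make the idea of a plant concrete, here is a minimal sketch in Python. It assumes a hypothetical first-order plant described by dy/dt = (gain * u - y) / tau, which is not taken from any particular device; the names `simulate_plant`, `gain`, and `tau` are my own choices for illustration. The loop integrates the equation with a simple Euler step and shows that a different input leads to a different output.

```python
def simulate_plant(u, gain=2.0, tau=1.0, dt=0.01, t_end=10.0):
    """Euler-integrate dy/dt = (gain * u - y) / tau from y(0) = 0.

    The first-order model and its parameter values are assumptions
    made for illustration only.
    """
    y = 0.0
    for _ in range(int(t_end / dt)):
        y += dt * (gain * u - y) / tau
    return y


# Two different constant inputs settle at two different outputs.
y_low = simulate_plant(u=1.0)
y_high = simulate_plant(u=1.5)
print(f"u=1.0 -> y={y_low:.3f}, u=1.5 -> y={y_high:.3f}")
```

For this assumed model the output settles close to `gain * u`, so raising the input by 50% raises the output by the same proportion.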

If we want the output to equal a certain value, we need a feedback loop that measures the output and compares it to a desired reference value, which serves as the input to the overall system. Most often this is a negative feedback loop, meaning that the measured output is subtracted from the reference value. The difference is the error signal, which we use to adjust the plant's input so that the output moves toward the desired value.

Why do we want to do this? Well, in the real world, there are disturbances that can unexpectedly affect whatever it is we want to control. This can be something like a strong gust of wind for airplanes or a power surge for power supplies.
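The effect of a disturbance can be sketched with the same kind of toy model. The example below assumes a hypothetical first-order plant with a constant disturbance added to it, and compares two cases: an open-loop input computed from the model alone, and a negative feedback loop that multiplies the error by a fixed gain `kp`. All names and numbers are assumptions chosen for illustration.

```python
def run(closed_loop, reference=1.0, disturbance=0.5,
        plant_gain=2.0, tau=1.0, kp=10.0, dt=0.01, t_end=10.0):
    """Simulate dy/dt = (plant_gain * u + disturbance - y) / tau.

    The plant model, the disturbance, and the gain kp are illustrative
    assumptions, not values from a real system.
    """
    y = 0.0
    for _ in range(int(t_end / dt)):
        if closed_loop:
            error = reference - y   # negative feedback: reference minus output
            u = kp * error          # adjust the input in proportion to the error
        else:
            u = reference / plant_gain  # input chosen from the model alone
        y += dt * (plant_gain * u + disturbance - y) / tau
    return y


y_open = run(closed_loop=False)
y_closed = run(closed_loop=True)
print(f"open loop:   y={y_open:.3f}")
print(f"closed loop: y={y_closed:.3f}")
```

In this sketch the open-loop output is pushed off the reference by the full size of the disturbance, while the feedback loop keeps it much closer. A small offset from the reference still remains in the closed-loop case.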

But negative feedback alone may not be enough. Simple control algorithms can help improve the dynamic response of the system. The most popular is the PID controller. PID stands for Proportional, Integral, Derivative. Depending on its design, the controller improves stability and the response to a change in the reference value, and it reduces the error that remains between the reference and the output once the system has settled (also known as the steady-state error).
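As a rough illustration, a discrete-time PID controller can be sketched in a few lines of Python. This is a textbook-style form under my own assumptions, not a production implementation: the class name `PID`, the gains, and the first-order plant with a constant disturbance are all hypothetical, and practical details such as integrator anti-windup and derivative filtering are left out.

```python
class PID:
    """Minimal discrete PID controller (illustrative sketch only)."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, reference, measurement, dt):
        error = reference - measurement
        self.integral += error * dt  # accumulated error (I term)
        # Rate of change of the error (D term); zero on the very first call.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return (self.kp * error
                + self.ki * self.integral
                + self.kd * derivative)


# Assumed plant: dy/dt = (plant_gain * u + disturbance - y) / tau
plant_gain, tau, disturbance = 2.0, 1.0, 0.5
reference, dt = 1.0, 0.01

controller = PID(kp=2.0, ki=5.0, kd=0.05)  # gains picked by hand for this toy plant
y = 0.0
for _ in range(int(20.0 / dt)):
    u = controller.update(reference, y, dt)
    y += dt * (plant_gain * u + disturbance - y) / tau

print(f"output after 20 s: {y:.4f}")
```

The integral term keeps growing as long as any error remains, which is why, in this sketch, the output settles on the reference even though a constant disturbance is present.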

The system of equations used to model the system can also get very complicated and nearly impossible to solve. In order to simplify the model, we may have to make assumptions or eliminate variables that we think will have a negligible effect on the output. This means that a model which we can work with is typically not a perfect representation of the real world. That’s okay though, because the purpose of the model is not to perfectly predict what will happen in the physical system, but to understand the basic relationship between input and output variables.

A perfect model wouldn’t be very useful anyway, because real-world components are not perfect either. In electrical engineering, we assume that general-purpose, inexpensive components have a tolerance of up to 10%, so every component is slightly different from its nominal value. That means that when we build our products, no two devices will be exactly the same, and the control parameters of each device will have to be slightly different. During development, the control parameters of our PID controller need to be tuned to ensure good control of the device.
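A quick way to see what component tolerance does is to sample a few "devices" whose plant gain deviates by up to 10% from its nominal value. The sketch below is an assumption-laden toy: it reuses a hypothetical first-order plant, for which the settled open-loop output is `gain * u` and the settled output under a proportional feedback gain `kp` is `gain * kp * r / (1 + gain * kp)`. The uniform distribution of the deviation is also my assumption.

```python
import random

rng = random.Random(0)   # fixed seed so the run is repeatable
nominal_gain = 2.0
tolerance = 0.10         # up to 10% deviation, assumed uniformly distributed
reference = 1.0
kp = 10.0                # same proportional gain used for every device

open_loop, closed_loop = [], []
for _ in range(5):
    gain = nominal_gain * (1.0 + rng.uniform(-tolerance, tolerance))
    # Open loop: the input is computed from the nominal model only.
    open_loop.append(gain * (reference / nominal_gain))
    # Closed loop: settled output of a proportional feedback loop.
    closed_loop.append(gain * kp * reference / (1.0 + gain * kp))

print("open loop:  ", [round(y, 3) for y in open_loop])
print("closed loop:", [round(y, 3) for y in closed_loop])
```

In this toy setup, feedback narrows the spread between devices considerably, but each device still behaves a little differently with the same controller settings.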

In summary, a control system has a feedback loop and a control algorithm. The basic design steps are:

1. Create a model of the system.
2. Find the relationship between input and output variables.
3. Design a suitable controller.
4. Tune the controller.
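The tuning step can be sketched as a brute-force search. The example below is only one simple approach under stated assumptions, not how tuning is necessarily done in practice: it assumes a hypothetical first-order plant, a PI controller, a small hand-picked grid of candidate gains, and the integrated absolute error of a unit step response as the cost. The function name `step_cost` and all numbers are my own.

```python
from itertools import product


def step_cost(kp, ki, plant_gain=2.0, tau=1.0, dt=0.01, t_end=5.0):
    """Integrated absolute error of a PI-controlled unit step response.

    Assumed plant: dy/dt = (plant_gain * u - y) / tau.
    """
    y, integral, cost = 0.0, 0.0, 0.0
    for _ in range(int(t_end / dt)):
        error = 1.0 - y
        integral += error * dt
        u = kp * error + ki * integral
        y += dt * (plant_gain * u - y) / tau
        cost += abs(error) * dt
    return cost


# Candidate (kp, ki) pairs: a small illustrative grid, not a recommendation.
candidates = list(product([0.5, 1.0, 2.0, 4.0], [0.5, 1.0, 2.0, 5.0]))
best_kp, best_ki = min(candidates, key=lambda gains: step_cost(*gains))
best_cost = step_cost(best_kp, best_ki)
print(f"best gains on this grid: kp={best_kp}, ki={best_ki}, cost={best_cost:.3f}")
```

A real tuning effort would also have to weigh things this cost ignores, such as overshoot, actuator limits, and measurement noise.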

Depending on the complexity of the project, it can take months to build a prototype and years to develop it and test it for robustness.

What I have provided here are the basics of control design, without the actual math involved in creating the models for the plant or the controller. In Part 2 of this post, I will contrast the models used to create robust controllers with the modeling of complex systems typically used in economics, climate science, and epidemiology.

This is Part 1 of a two-part series.